Increasing Ubiquity

The consequence of self-reproduction in life, as well as in the technium, is an inherent drive toward ubiquity. Given enough resources, duplication of one type will keep going until all its construction resources are consumed. All things being equal, dandelions, or raccoons, or asphalt will replicate till they cover the earth. Evolution equips a replicant with tricks to maximize its spread no matter the constraints. But because physical resources are limited, and competition relentless, no species can ever reach full ubiquity. Yet all life is biased in that direction. Technology, too, wants to be ubiquitous.

Humans are the reproductive organs of technology. We multiply manufactured artifacts and spread ideas and memes. Because humans are limited (only 6 billion alive at the moment) and there are tens of millions of species of technology or memes to spread, none can reach full 100% ubiquity, although several come close.

Nor do we really want all technology to be ubiquitous. It would be best for ourselves if remedial technology like artificial hearts never became very common. Preferably, we would engineer away the need for replacement hearts through genetics or drugs or diet. In the same way, the remedial technology of carbon sequestration (removing carbon from the atmosphere) would ideally never become ubiquitous. Best would be an energy system based on photons (solar), fusion (nuclear), wind, or, at the very least, burning hydrogen rather than burning carbon. The spread of fuels relying on zero carbon, or little carbon (wood, coal, oil and gas have an ascending percent of hydrogen per carbon in that order) would thus negate the spread of carbon sequestration technology. Thus rival technologies keep themselves in check.

Individual species of technology, like species of weeds, tend to multiply towards ubiquity to fill their available niche. But a technium packed with remedial technologies does not have a long-term trajectory, just as an ecosystem composed only of weeds will not survive as long as one with less opportunistic components. Artificial hearts do not offer as many long-term options to a person, or society, as does a natural heart kept healthy by other technologies. Remedial atmospheric solutions do not offer as many future options as superior energy sources. The niches for replacement hearts, cataract surgery, pollution reducers, data recovery, and so on are in the long run – at civilization scale – narrow places for ubiquity. Once their niches are filled, they lead nowhere else. They are stopgap and self-limiting. Like a smallpox vaccine. Ideally a vaccine has no future if it is universally successful.

Rather than self-limiting technologies, the technium favors the type of ubiquity found in open-ended technologies, that is, those technologies that effectively hasten the arrival of other open-ended technologies. This expansion unleashes cascades of other technologies that spread pervasively.

From a planetary biosphere perspective the most ubiquitous technology on Earth is agriculture. The steady surplus of high-quality food from agriculture is vigorously open-ended in that this abundance enabled civilization and birthed its millions of technologies. The spread of agriculture is the largest-scale engineering project on the planet. Nearly half of Earth’s land surface has been altered by the mind and hand of humans. Native plants have been displaced, soil moved, and domesticated crops planted in their stead. Great stretches of Earth’s surface have been semi-domesticated into pasture land. The most drastic of these changes – such as uninterrupted tracts of giant farms – are visible from space. Measured in number of square kilometers, the most ubiquitous technologies on the planet are the five major domesticated species: maize, wheat, rice, sugarcane, and cows.

Other more subtle technological alterations are visible in the ecological history of a place. By many experts’ accounts, there have not been any wilderness areas on this planet for perhaps five thousand years. Most of the areas we ordinarily consider wild (like the Amazon or the Congo, or the American West) are in fact the result of thousands of years of human intervention. By setting seasonal fires, by selectively hunting certain species, or by selectively harvesting certain plants, tribal people have groomed the landscape for food production over the centuries. No territory on the planet has completely escaped the inquisitive and disruptive impulses of the human mind to tame the environment. Hunter/gatherers now live or have lived everywhere (except for some Antarctic areas) and wherever people dwell, they use technology to modify the “natural” ecology and terraform their continent.

The third most ubiquitous planetary technology is roads. Simple clearings for the most part, dirt roads extend their root-like tentacles into most watersheds, criss-crossing valleys and winding their way up many mountains. The web of constructed roads forms a reticulated cloak around the continents of this planet. Strings of buildings follow along the dendritic branches of roads. These nodes are made of cut tree fiber (wood, thatch, bamboo) or molded earth (adobe, brick, stone, concrete) and may be the fourth most common technology.

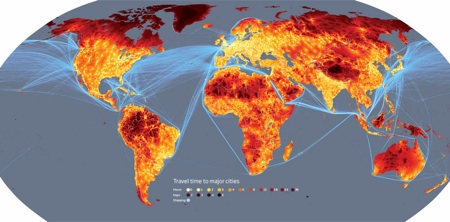

This map of the world shows travel time to major cities: closer is lighter, farther is darker. In essence it is a map of the global road network. (via New Scientist)

Not as visible, but perhaps more pervasive at the planetary level, are the technologies of fire. Controlled burning of carbon fuels, particularly mined coal and oil, has led to changes in the Earth’s atmosphere. Reckoned in total mass and coverage, these furnaces (which often travel along the roads as engines in automobiles) are dwarfed by roads. Though smaller in scale than the roads they ride on, or the homes and factories they burn in, these tiny deliberate fires are able to shift the composition of the globe’s voluminous atmosphere. It is possible that this collective burning may be the largest-scale technological impact on the planet.

While magnificent stone and silica cities and their sprawl symbolize our technium, they are far from ubiquitous. Their footprint is small compared to agriculture, but megalopolises have rerouted the flow of materials so that much of the technium circulates through them. Rivers of food and raw materials flow in, and debris flows out. Every person living in a developed country moves 20 tons of material annually.

Then there are the things we surround ourselves with. From the perspective of daily modern human life, the list of near-ubiquitous technologies includes cotton cloth, iron blades, plastic bottles, paper, and radio signals. These five technological species are within reach of nearly every human alive today, both in the cities and in the most remote rural villages. Each of these technologies opens up vast new territories of possibilities: paper – cheap writing, printing, and money; metal blades – art, craft, gardening, and butchering; plastic – cooking, water, and medicines; radio – connection, news, and community. Fast on their tracks follow the nearly ubiquitous species of metal pots, matches, and cell phones.

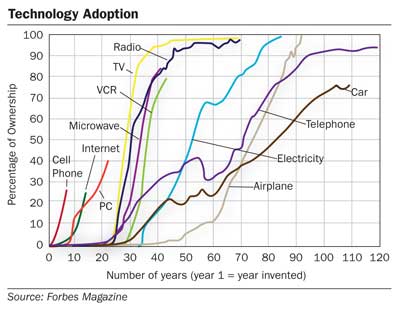

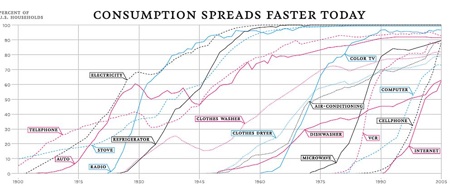

Total ubiquity is the end point all technologies tend toward but never reach. But there is a practical ubiquity of near saturation, which is sufficient to flip the dynamic of a technology onto another level. In the developed world and urban places everywhere, the speed at which new technologies disperse to the point of saturation has been increasing.

Whereas it took electrification 45 years to reach 90% of US residents, it’s taken only 20 years for cell phones to reach the same penetration. The rate of diffusion is accelerating. A straight line extrapolation would suggest that the rate of technological adoption should continue to accelerate until it occurs instantaneously. By the year 2100, a personal teleporter, say, should be adopted by everyone alive the year it is introduced. A new immersive VR suit the day after it is released. And a new wireless wearable communicator the hour after it is invented.
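To make the straight-line extrapolation concrete, here is a minimal sketch in Python. The 45-year and 20-year figures come from the paragraph above; the introduction years (1880 for electrification, 1980 for cell phones) are round numbers assumed purely for illustration, and the function names are hypothetical.

```python
# Straight-line extrapolation of "years needed to reach 90% adoption".
# The 45- and 20-year figures are from the text; the introduction years
# are ASSUMED round numbers, chosen only to illustrate the arithmetic.
ELECTRIFICATION = (1880, 45)  # (assumed introduction year, years to 90%)
CELL_PHONES = (1980, 20)      # (assumed introduction year, years to 90%)


def slope(p0, p1):
    """Change in adoption time per calendar year between two points."""
    (x0, y0), (x1, y1) = p0, p1
    return (y1 - y0) / (x1 - x0)


def years_to_saturation(p0, p1, intro_year):
    """Extend the line through p0 and p1; adoption time cannot drop below zero."""
    x1, y1 = p1
    return max(0.0, y1 + slope(p0, p1) * (intro_year - x1))


def year_of_instant_adoption(p0, p1):
    """Calendar year at which the extended line reaches zero adoption time."""
    x1, y1 = p1
    return x1 - y1 / slope(p0, p1)


if __name__ == "__main__":
    print(years_to_saturation(ELECTRIFICATION, CELL_PHONES, 2020))
    print(year_of_instant_adoption(ELECTRIFICATION, CELL_PHONES))
    print(years_to_saturation(ELECTRIFICATION, CELL_PHONES, 2100))
```

Under these assumed dates the line loses a quarter of a year of adoption time per calendar year and reaches zero around 2060, so anything introduced in 2100 would, on paper, be adopted instantly. Different starting dates would move the crossing point, but not the shape of the argument.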

Rates of diffusion of consumer technology (via NYTimes)

However, that scenario is unlikely to happen because technology specializes as fast as it becomes common, so most technology will not be adopted by most people. In fact the more complex the technology, the less likely it will reach near-ubiquity. The peak global penetration for the average technological innovation will drop over time. We can see a hint of that in the chart above. The level of peak penetration at which diffusion plateaus is falling over time. Any particular new species of communication device in the next century is unlikely to ever reach the same ubiquity as machine-woven cotton cloth, or even the television.

But something strange happens with ubiquity. More is different. A few automobiles roaming along a few roads is fundamentally different than a few automobiles for every person. And not just because of the increased noise and pollution. A billion operating cars spawn an emergent system that creates its own dynamics. Ditto for most inventions. The first few cameras were a novelty. Their impact was primarily to put painters out of the job of recording the times. But as photography became easier to use, common cameras led to intense photojournalism, and eventually they hatched movies and Hollywood alternative realities. The further diffusion of cameras cheap enough that every family had one in turn fed tourism, globalism and international travel. The further diffusion of cameras into cell phones and digital devices birthed a universal sharing of images, the acceptance that something was not real until it was captured in a camera, and a sense that there is no significance outside of the camera view. The further diffusion of cameras embedded into the built environment, peeking from every city corner and peering down from every room ceiling, forces a transparency upon society. Eventually every surface of the built world will be covered with a screen and every screen will double as an eye. When the camera is fully ubiquitous everything is recorded for all time. We have a communal awareness and memory. That’s a long way from simply displacing painting.

I met a fellow many years ago who spent ten years wearing a tiny camera in front of his left eye. This head-mounted camera captured everything that happened in his life and transmitted it back to his website. When Steve Mann started his experiment of recording and broadcasting his life as a grad student, he was a lone eccentric. While he was standing there talking to you, with one eye open and the other filming, his unconventional approach to documentation seemed like performance art. One could not really object to it, because, well, he was such an outlier.

In the course of his years of living ordinary life as a one-eyed camera, going shopping, to school, to events with his friends, Mann discovered that, ironically, the more surveillance cameras a particular store, plaza, or gathering place had, the more their guards objected to individuals like him recording their own view. The watchers hated to be watched. Mann calls his inverse surveillance “sousveillance,” a word coined by replacing the French “sur,” for above, with the French “sous,” for below, as in watching from the bottom up.

After he graduated from MIT, Mann became a professor and his grad students used the next generation of smaller circuitry to craft their own miniature sousveillance gear. Some were tiny enough to fit unobtrusively into sunglasses. The students would record each other. In the meantime, cell phones sprouted hi-res cameras and video cams connected to the net, which performed the same sousveillance actions. Suddenly, there were millions of public eyes watching each other. Sousveillance had gone from a node of one to near ubiquity. A few years ago when all this sousveillance was new, a girl on a Korean subway let her dog crap on the floor without cleaning up the mess. Her transgression was captured by several sousveillance phonecams and eventually broadcast on national TV. She was shamed into apology by a new ubiquity.

One thousand live cameras always-on make downtowns safe from pickpockets, nab stop-light speeders, and record police misbehavior. One billion live cameras always-on serve as a community monitor and memory; they give the job of eyewitness to amateurs; they restructure the notion of the self, and a billion cameras demote the authority of authorities.

One thousand automobiles open up mobility, create privacy, supply adventure. One billion automobiles create suburbia, eliminate adventure, erase parochial minds, trigger parking problems, birth traffic jams, and remove the human scale of architecture.

One thousand teleportation stations rejuvenate vacation travel. One billion teleportation stations overturn commutes, enhance globalism, introduce tele-lag sickness, re-introduce the grand spectacle, kill the nation state, and end privacy.

One thousand human genetic sequences jump-start personalized medicine. One billion genetic sequences every hour enable real-time genetic damage monitoring, upend the chemical industry, redefine illness, make genealogies relevant, unravel the packaging industry, and launch “ultra-clean” lifestyles that make organic look filthy.

One thousand screens the size of buildings keep Hollywood going. One billion screens everywhere become the new art, create a new advertising media, vitalize cities at night, accelerate locative computing, and rejuvenate the commons.

One thousand humanoid robots revamp the Olympics and give a boost to entertainment companies. One billion humanoid robots cause massive shifts in employment, reintroduce slavery and its opponents, and demolish the status of established religions.

In the course of evolution every technology is put to the question: What happens when it becomes ubiquitous? What happens when everyone has one?

Usually it disappears. Electric motors, born large, rare and obvious, quickly became invisible and everywhere. Shortly after their invention in 1873 modern electric motors propagated throughout the manufacturing industry. Each factory stationed one very large expensive motor in the place where a steam engine formerly stood. That single engine turned a complex maze of axles and belts, which in turn spun hundreds of smaller machines scattered throughout the factory. The rotational energy twirled through the buildings from that single source.

Machinery for grinding crankshafts at the Ford Motor Company, 1915. (From Hounshell)

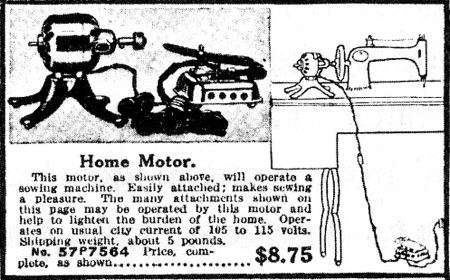

By the 1910s electric motors started their inevitable spread into homes. They had been domesticated. Unlike a steam engine, they did not smoke or belch or drool. Just a tidy steady whirr from a 5-pound hunk. As in factories, these single “home motors” were designed to drive all the machines in one home. The 1916 Hamilton Beach “Home Motor” had a 6-speed rheostat and ran on 110 volts. Designer Donald Norman points out a page from the 1918 Sears, Roebuck and Co. catalog advertising the Home Motor for $8.75 (which is equivalent to about $100 these days). This handy motor would spin your sewing machine. You could also plug it into the Churn and Mixer Attachment (“for which you will find many uses”), and the Buffer and Grinder Attachments (“will be found very useful in many ways around the home”). The Fan Attachment “can be quickly attached to Home Motor”, as could the Beater Attachment to whip cream and beat eggs.

One hundred years later the electric motor has seeped into ubiquity. There is no longer one home motor in a household; there are dozens of them, and each is nearly invisible. No longer stand-alone devices, motors are now integral parts of many appliances. They actuate our gadgets, acting as the muscles for our artificial selves. They are everywhere. I made an informal census of all the embedded motors I could find in the room I am sitting in while I write:

5 spinning hard disks

3 analog tape recorders

3 cameras (move zoom lenses)

1 video camera

1 watch

1 clock

1 printer

1 scanner (moves scan head)

1 copier

1 fax (moves paper)

1 CD player

1 pump in radiant floor heat

That’s 20 home motors in one room. A factory or office building would have thousands. We don’t think about motors. We are unconscious of them, even though we depend on their work. They rarely fail. We aren’t aware of roads and electricity because they are ubiquitous and usually work. We don’t think of paper and cotton clothing as technology because their reliable presences are everywhere.

In addition to a deep embeddedness, ubiquity also breeds certainty. The advantages of new unknown technology are always disruptive. The first version of an innovation is cumbersome and finicky. A newfangled type of plow, waterwheel, saddle, lamp, phone, or automobile can only offer uncertain advantages for certain trouble. Even after an invention has been perfected elsewhere, when it is first introduced into a new zone or culture it requires the re-education of old habits. The new type of waterwheel may require less water to run, but also require a different type of milling stone that is hard to find, or it may produce a different quality of flour. A new plow may speed tilling but demand planting seed later, thus disrupting ancient traditions. A new kind of automobile may have a longer range but less reliability, or greater efficiency but less range, altering driving and fueling patterns. That is why only a few eager pioneers are inclined to adopt an innovation at first, because the new primarily promises uncertainty and the unknown. As an innovation is perfected, its benefits and education are sorted out and illuminated, it becomes less uncertain, and the technology spreads. That diffusion is neither instantaneous nor even.

In every technology’s lifespan then, there will be a period of “haves” and “have nots.” Clear advantages may flow to the individuals or societies who first risk untried guns, or the alphabet, or electrification, or the internet, over those who do not. The distribution of these advantages may depend on wealth, privilege, or lucky geography as much as desire. This divide between the haves and the have-nots was most recently and most visibly played out at the turn of the last century when the internet blossomed.

The internet was invented in the 1970s and offered very few benefits at first. It was primarily used by its inventors, a very small clique of professionals fluent in programming languages, as a tool to improve itself. From birth the internet was constructed in order to make talking about the idea of an internet more efficient. Likewise, the first ham radio operators primarily broadcast discussions about ham radio; the early world of CB radio was filled with talk about CB; the first blogs were about blogging; the first several years of twitterings concerned Twitter. By the early 1980s, early adopters who mastered the arcane commands of network protocols in order to find kindred spirits interested in discussing this tool moved onto the embryonic internet and told their nerdy friends. But the internet was ignored by everyone else as a marginal, teenage male hobby. It was expensive to connect to; it required patience, the ability to type, and a willingness to deal with obscure technical languages; and very few other non-obsessive people were online. Its attraction was lost on most people.

But once the early adopters modified and perfected the tool to give it pictures and a point-and-click interface (the web), its advantages became clearer and more desirable. As the great benefits of digital technology became apparent, the question of what to do about the have-nots became a bothersome issue. The technology was still expensive, requiring a personal computer, a telephone line, and a monthly subscription fee – but those who adopted it acquired power through knowledge. Professionals and small businesses grasped its potential. The initial users of this empowering technology were – on the global scale – the same set of people who had so many other things: cars, peace, education, jobs, opportunities.

The more evident the power of the internet as an uplifting force became, the more evident the divide between the digital haves and have-nots. One sociological study concluded that there were “two Americas” birthing, as well as two worlds. The citizens of one were poor people who could not afford a computer, and of the other, wealthy individuals equipped with PCs who reaped all the benefits. During the 1990s when technologists such as myself were promoting the advent of the internet, we were often asked what we were going to do about the digital divide. My answer was simple: nothing. We didn’t have to do anything, because the natural history of a technology such as the internet was self-fulfilling.

The have-nots were a temporary imbalance that would be cured (and more so) by market forces. There was so much profit to be made connecting up the rest of the world, and the unconnected were so eager to join, that they were already paying more per minute of telecom connectivity when they could get it. Furthermore, the costs of both computers and connectivity were dropping by the month. At that time most poor in America owned televisions and had monthly cable bills. Owning a computer and internet access was no more expensive and would soon be cheaper than TV. In a decade the outlay would reach a $100 laptop. Within the lifetimes of all born in the last decade, computers of some sort (a connector really) would cost $5.

This was simply a case, as computer scientist Marvin Minsky once put it, of the “haves and have-laters.” The haves (the early adopters) overpay for crummy early editions of technology that barely works. Their purchases of flaky version 1.0 of new goods finance cheaper and better versions for the have-laters, who will get it for dirt cheap not long afterwards. In essence the “haves” fund the evolution of technology for the have-laters. Isn’t that how it should be, that the rich fund the development of cheap technology for the poor?

We saw this “have-later” cycle play out all the more clearly with cell phones. The very first cell phones were larger than bricks, extremely costly, and not very good. I remember an early-adopter techie friend who bought one of the first cell phones; he carried it around in its own dedicated briefcase. I was incredulous that anyone would pay that much for something that seemed more toy than tool. It seemed equally ludicrous at that time to expect that within two decades, the $2,000 devices would be so cheap as to be disposable, so tiny as to fit in a shirt pocket, and so ubiquitous that even the street sweepers of India and the rickshaw drivers of China had one. While internet connection for sidewalk sleepers in Calcutta seemed impossible, the long-term trends inherent in technology aim it towards ubiquity. In fact, in many respects the cell coverage of these “later” countries overtook the quality of the older US system so that the cell phone became a case of the “haves” and “have-sooners,” in that the later adopters got the ideal benefits of mobile phones sooner.

The fiercest critics of technology still focus on the ephemeral “have and have-not divide,” but that flimsy border is a distraction. The significant threshold of technological development lies at the boundary between commonplace and ubiquity, between the have-laters and the “all-have.” When critics asked us champions of the internet what we were going to do about the digital divide, and I said “nothing,” I added a challenge: “If you want to worry about something, don’t worry about the folks who are currently offline. They’ll stampede on faster than you think. Instead you should worry about what we are going to do when everyone is online. When the internet has 6 billion people, and they are all emailing at once; when no one is disconnected and always on day and night, when everything is digital and nothing offline, when the internet is ubiquitous.”

When a technology saturates, or even supersaturates, a culture, it unleashes patterns not seen in lone examples of it. A few isolated manifestations of a technology can reveal its first-order effects. But it is not until technology fills a vast, thick interacting pervasion that the second- and third-order consequences erupt. Don’t worry about those who don’t have a car; worry what happens when everyone has a car. Don’t worry about those families who cannot afford genetic engineering; worry what happens when everyone is engineering. Don’t worry about those who don’t own a personal teleporter; worry what happens when everyone has one. Most of the unintended consequences that so scare us in technology usually arrive in ubiquity.

And most of the good things as well. The trend toward embedded ubiquity is most pronounced in technologies that are open-ended: communications, computation, socialization, and digitization. And no technology is as open-ended as the mind. The mind is nearly the definition of open-endedness since its limits are imperceptible and unimaginable. We see no closure to the possibilities of an ever-diffusing intelligence. If a human mind can upfold a greater mind, ad infinitum, this upcreation represents the ultimate open-endedness.

The all-pervasiveness of open-ended technologies settles further and further into the matrix of infrastructure. We are busy right now infusing our shoes, clothes, household appliances, vehicles, sports equipment, handhelds, pets, landscape – everything that we touch and touches us – with communication, computation and intelligence. In this ubiquity they open up more new technology, and trigger new levels of consequence.

Because of their open-endedness, the amount of computation and communication that can be crowded into matter and materials, stuffed into the environment, and invested into everything we make seems infinite. Like the magician who keeps pouring water into the bottomless cup, we can keep pouring mind, intelligence, and information into the technium without limit. There is nothing we have invented to date of which we’ve said, “It’s smart enough.” In this way the ubiquity of technology is insatiable. It will absorb all mindedness.

The ever-expanding base of our creations works like a vacuum sucking technology toward it. It is constantly stretching the technium towards a pervasive presence. Pulled by open possibilities and pushed by relentless duplication, technology wants ubiquity.